If you’ve read my blogs before or seen me speak, you’ll know that I love the DevOps.

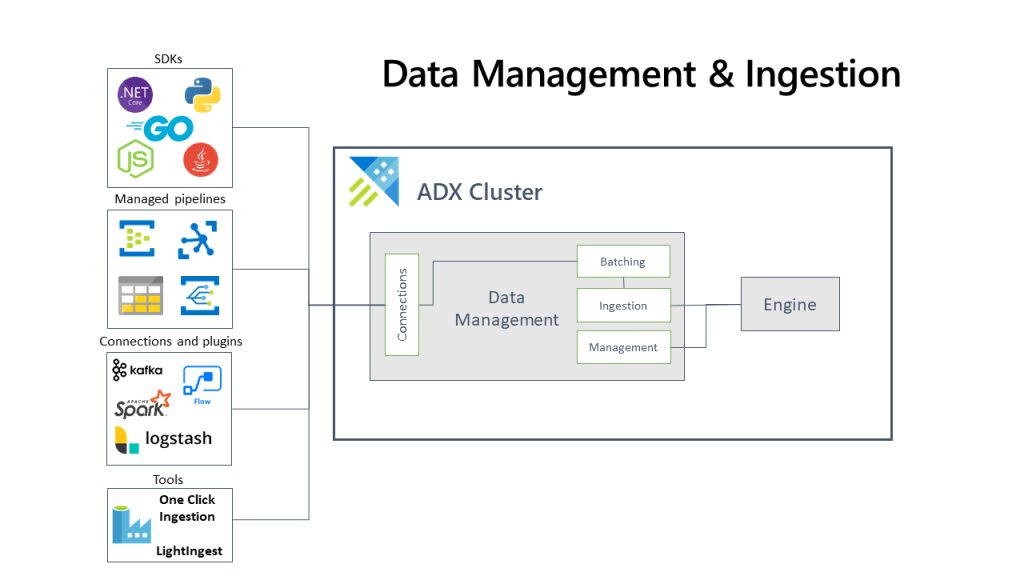

Provisioning Azure Data Explorer can be a complex task, involving multiple steps and configurations. However, with the help of DevOps methodologies like infrastructure as code, we can spin up an Azure Data Explorer cluster in no time.

I like to use Terraform, an open-source infrastructure as code tool. With it we can automate the entire provisioning process, making it faster, more reliable, and less error-prone.

In this blog post, I walk through how to use Terraform to provision Azure Data Explorer, step by step.

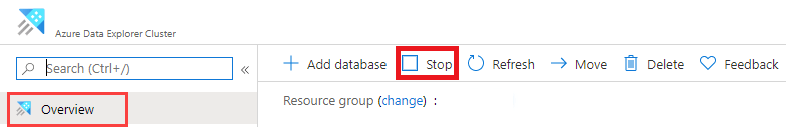

Step 1: Set up your environment

Before you can begin using Terraform to provision Azure Data Explorer, you need to set up your environment. This involves installing the Azure CLI, Terraform, and other necessary tools. You’ll also need to create an Azure account and set up authentication.
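Part of that setup can live in the configuration itself. As a minimal sketch, a ‘terraform’ block can pin the Terraform and provider versions so that everyone running the project resolves the same plugins. The version constraints below are assumptions for illustration, not requirements from this post, and newer major versions of the azurerm provider may need extra provider settings, such as a subscription ID.

```hcl
# Sketch only: the version constraints are illustrative assumptions.
terraform {
  required_version = ">= 1.3.0"

  required_providers {
    azurerm = {
      source  = "hashicorp/azurerm"
      version = "~> 3.0"
    }
  }
}
```

For local work, signing in with the Azure CLI (‘az login’) is usually enough for the azurerm provider to pick up your credentials.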

Step 2: Define your infrastructure as code

Once you have your environment set up, you can begin defining your infrastructure as code using Terraform. This involves writing code that defines the resources you want to provision, such as Azure Data Explorer clusters, databases, and data ingestion rules.

Step 3: Initialize your Terraform project

After defining your infrastructure as code, you need to initialize your Terraform project by running the ‘terraform init’ command. This will download any necessary plugins and modules and prepare your project for deployment.

Step 4: Deploy your infrastructure

Now that your Terraform project is initialized, you can deploy your infrastructure by running the ‘terraform apply’ command. This will provision all the resources defined in your code and configure them according to your specifications.

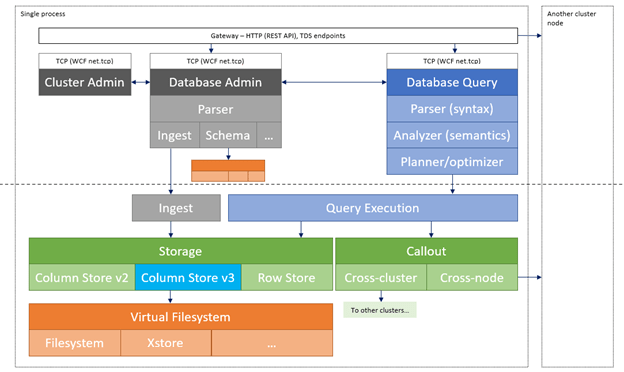

Step 5: Test and monitor your deployment

Once your infrastructure is deployed, you should test and monitor it to ensure it is working as expected. This may involve running queries on your Azure Data Explorer cluster, monitoring data ingestion rates, and analyzing performance metrics.

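```hcl
# Configure the Azure provider; the empty features block is required.
provider "azurerm" {
  features {}
}

# Resource group that holds the cluster and database.
resource "azurerm_resource_group" "example" {
  name     = "my-resource-group"
  location = "West US 2"
}

# Azure Data Explorer (Kusto) cluster.
# Cluster names allow only lowercase letters and digits, so no hyphens.
resource "azurerm_kusto_cluster" "example" {
  name                = "mykustocluster"
  location            = azurerm_resource_group.example.location
  resource_group_name = azurerm_resource_group.example.name

  # SKU and instance count are set together in a nested sku block.
  sku {
    name     = "Standard_D13_v2"
    capacity = 2
  }
}

# Database inside the cluster; location is a required argument.
resource "azurerm_kusto_database" "example" {
  name                = "my-kusto-database"
  resource_group_name = azurerm_resource_group.example.name
  location            = azurerm_resource_group.example.location
  cluster_name        = azurerm_kusto_cluster.example.name
}
```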

In this code, we first declare the Azure provider and define a resource group. We then define an Azure Data Explorer cluster using the azurerm_kusto_cluster resource, specifying the name, location, resource group, SKU, and capacity. Finally, we define a database using the azurerm_kusto_database resource, specifying the name, resource group, and the name of the cluster it belongs to.

Once you have this code in a .tf file, you can use the Terraform CLI to initialize your project, authenticate with Azure, and deploy your infrastructure.
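To support the testing in Step 5, it can help to have Terraform print the cluster endpoints once the deployment finishes. The sketch below assumes the ‘example’ cluster resource from the code above. The output names are hypothetical, while ‘uri’ and ‘data_ingestion_uri’ are attributes exported by the azurerm_kusto_cluster resource.

```hcl
# Hypothetical output names; they reference the cluster defined above.
output "kusto_cluster_uri" {
  description = "Query endpoint for the Azure Data Explorer cluster"
  value       = azurerm_kusto_cluster.example.uri
}

output "kusto_data_ingestion_uri" {
  description = "Endpoint used for data ingestion"
  value       = azurerm_kusto_cluster.example.data_ingestion_uri
}
```

After ‘terraform apply’ completes, running ‘terraform output’ lists these values, so you can point your query tool straight at the cluster URI.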

Using Terraform to provision Azure Data Explorer offers many benefits, including faster deployment times, greater reliability, and improved scalability. By automating the provisioning process, you can focus on more important tasks, such as analyzing your data and gaining insights into your business.

Terraform is a powerful tool that can simplify the process of provisioning Azure Data Explorer. By following the steps outlined in this blog post, you can quickly and easily set up a scalable and efficient data analytics solution that can handle even the largest data volumes.

Yip.